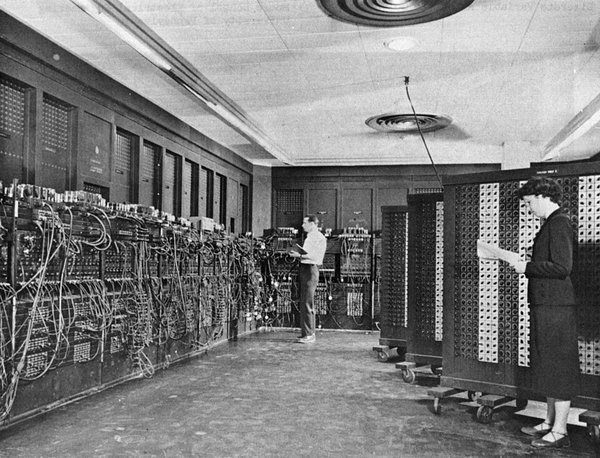

TRN vs ENIAC

Image Credit: U.S. Army Photo, Public Domain, https://commons.wikimedia.org/w/index.php?curid=55124

Power plants churn with intense fury, fueling intelligent monsters whose obvious scaling issues are approaching their limits with dramatic rapidity. GPUs multi-thread in cyber sweatshops, trying to satiate the hunger pangs of frontier-generation LLMs. A Tiny Recursive Network (TRN) pokes his head in the door of the humming server warehouse and says, "Hey ENIAC, your days are numbered."

Can your CNN classifier learn CIFAR-10 with a stream of 250 random test images? Gradients explode, leaving a nuclear winter of randomness while hyperparameters scatter in the blast. "I'm giving her all she's got, Captain. If I push her any harder, the whole thing will blow." TRN -> "Hold my beer."

Ladies and gentlemen, the knowledge of the World will be vectorized by MUCH smaller devices than you are currently constructing. Your effort is appreciated, and your remarkable progress has been recorded. Now, turn your focus to miniaturization. TRNs are a start.

As humans, we learn from a stream of data. No one sits down and reviews 50,000 images trying to determine the visual differences between cats and dogs. The future of AI will be similar: 1. Build foundational knowledge through initial training; 2. Assimilate new information on the fly with reasoning and self-correction. This is the nature of life. This is how AI will live.

Alexia Jolicoeur-Martineau has performed pioneering work with TRNs (link below). These networks don't require billions of parameters to learn. The future approaches. Godspeed.